This is how Facebook's censorship centers work

'A Clockwork Orange' is a Stanley Kubrick film famous for having been banned in many countries because of its violent images and an ambiguous message that many could misinterpret. In it, an ultraviolent young man is captured by the authorities and subjected to an experiment meant to suppress his free will, that is, a method to turn him into a good person. How did they do it? Through shock treatment: the subject is tied down in front of a giant screen, his eyes held permanently open, and forced to watch unpleasant scenes for hours on end. Once released, whenever he feels the urge to commit violent acts he is overcome by nausea, conditioned by what he was put through days earlier.

This is how the censorship center works on Facebook

Now imagine that your job is to look at violent, unpleasant images, hate content, insults, videos of beheadings … for eight hours a day, for a large company. Your work in this center helps determine what others can and cannot see. You work for one of the 20 centers that Facebook has set up around the world to enforce censorship of the content prohibited by Mark Zuckerberg's social network. Eldiario.es has had access to sources at these centers in cities such as Warsaw and Lisbon, as well as to documents that reveal the conditions under which these agents have to apply censorship.

According to these documents, Facebook's agents of morality and good conduct have to work in deplorable conditions: windowless offices in which hundreds of people must stare continually at a screen where grotesque content appears, so that they can remove it and stop it from spreading. Like Alex in 'A Clockwork Orange', they are subjects exposed to terrible images for eight hours a day without anyone having properly assessed what the consequences of such exposure may be. The algorithms we talk about so much are in fact people like you and me.

Their physical and mental working conditions are extreme

In total, 15,000 people work in centers like these. Many of them say that it is impossible to be 100% fair and that, given the conditions in which they work and the nature of the task, mistakes can happen all the time. Facebook's censorship workers each earn 700 euros a month and are subcontracted through international consultancies. They are forbidden to tell anyone that they work for Facebook and must always refer to it as 'the client'. Eldiario.es has obtained statements from three employees of centers of this kind, whose anonymity it has had to preserve.

The working conditions are extreme. They have half an hour of rest in their eight-hour day, and they must ration it out hour by hour to take visual breaks, stretch their legs, go to the bathroom and even eat. There is always a reviewer in the room who notes and punishes inappropriate behavior: an employee who pauses while reviewing images to drink water, or who picks up a phone to check something, can be sanctioned. In addition, a points program encourages employees to report one another if they see a colleague doing anything sanctionable.

What does their job consist of?

The work goes as follows. The employee sits in front of a screen that shows the content that has accumulated the most user complaints. The worker then has two options: 'Delete', which removes the content, or 'Ignore', if he considers that it does not break Facebook's rules. Each person may review up to 600 cases a day and has thirty seconds in total for each one to press one of the two decision buttons. Given all that is now known about these centers, it is hardly surprising that injustices are committed.

The newspaper gives examples of real cases in which the interviewed employees had to intervene. One of them is this image, an illustration raising awareness of breast cancer.

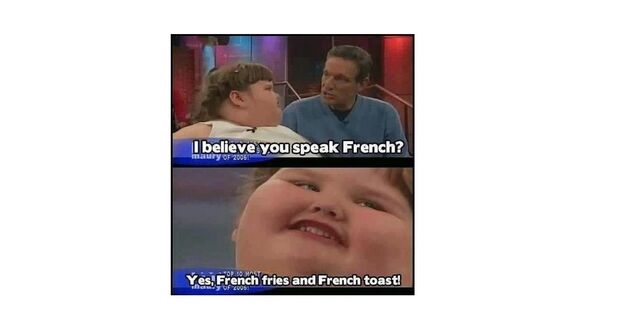

This other example shows how complicated the moderators' work can be. Is this meme an act of child harassment? The employee has thirty seconds to decide whether to remove it or not. It is a screenshot from a real interview, and obesity is not considered a disability. On Facebook, nothing is deleted merely because it could offend someone, unless that person belongs to a group of people with disabilities. In this case the moderator would choose the 'Ignore' button.

The contradictions of censorship on Facebook

These two examples may be more or less clear-cut, but they sit among many others in which the ambiguity of Facebook's rules comes into play. For example, content that alludes to Hitler is automatically deleted, whereas content that praises Franco is allowed. One of the reviewers consulted states that fascism is permitted on Facebook. You can fill your wall with photos of Mussolini and nothing will happen. But beware of posting Hitler. Or women's nipples.

Among the most absurd decisions that Facebook's censors have to make is judging from the size of an Arab man's beard whether or not he is a terrorist. In addition, the interviewees denounce a clear double standard that requires them to favor specific groups. 'Insulting certain beliefs is allowed and others are completely prohibited.' Zionism is one example: any call to boycott Israeli products over the massacres that the country carries out in Palestine is censored. Moreover, the likelihood that content will be removed from Facebook has a lot to do with the power and organizational capacity of the group that wants it gone.

It remains to be seen what psychological after-effects these Facebook employees will suffer, as well as the peculiar ideological use being made of content moderation.


Published on TuExperto on 2019-02-26 05:43:39

Author: Antonio Bret
